The Three-Layer ROI Framework: Why Most AI Projects Fail Before They're Measured

Companies measuring AI ROI the wrong way kill good projects and overfund bad ones. Here's how operators at scale separate signal from noise.

A mid-market SaaS company deployed an AI agent to handle tier-one customer support tickets. The finance team ran projections before launch—labor savings were real, the payback looked solid. Three months in, they checked the ROI spreadsheet. It was underwater.

The agent resolved 38% of tickets, which should have been a win. But the cost per ticket was only marginally better than the human baseline. Implementation was still being amortized. On pure efficiency metrics, the project looked like a mistake.

Six months later, the CFO noticed something odd in the churn data: a 2.1 percentage point drop. Support response time had compressed from 12 hours to 8 minutes. Customers weren't just getting faster answers—they were escalating fewer issues because the AI was solving them the first time. When the team recalculated ROI with retention factored in, the project showed 340% annual return.

The math was always there. They just measured the wrong thing.

The Measurement Trap

Most AI ROI frameworks are borrowed from software implementation playbooks. Pick a cost center. Measure baseline. Measure post-implementation. Calculate savings. Divide by investment. Report to board.

This works fine when AI is purely tactical—automating data entry, compressing processing time. It fails completely when AI touches revenue, retention, or competitive positioning.

A manufacturing company deployed AI for production scheduling. The direct calculation was clean: 120 fewer labor hours per month, $400K in annual savings. But the real value was invisible in that math. Capacity utilization improved from 71% to 84%. That efficiency meant they could accept a new $8M contract without building new production lines. A traditional ROI model that counted only the $400K in savings would have missed a contract worth 20 times as much.
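The gap between the two views is easy to check on the back of an envelope. The sketch below uses only the figures quoted above. It compares the contract value directly to the labor savings, which is a simplification (contract revenue is not margin), and the function and variable names are illustrative.

```python
def undervaluation_factor(direct_savings: float, enabled_value: float) -> float:
    """How many times larger the enabled value is than the direct savings."""
    return enabled_value / direct_savings


direct_annual_savings = 400_000      # labor savings from the direct calculation
enabled_contract_value = 8_000_000   # contract accepted without new production lines

# Utilization moved from 71% to 84%: 13 points, or about 18% more output
# from the same lines.
utilization_before, utilization_after = 0.71, 0.84
relative_capacity_gain = utilization_after / utilization_before - 1

factor = undervaluation_factor(direct_annual_savings, enabled_contract_value)
print(f"Enabled value vs. direct savings: {factor:.0f}x")
print(f"Relative capacity gain: {relative_capacity_gain:.1%}")
```

A more careful version would compare contribution margin on the contract, not revenue, but the order of magnitude is the point.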

The deeper problem: when companies chase only direct ROI, they stop funding projects that are hardest to measure but most valuable. They avoid anything touching customer experience, market positioning, or competitive velocity. They become extremely efficient at optimizing what's already declining.

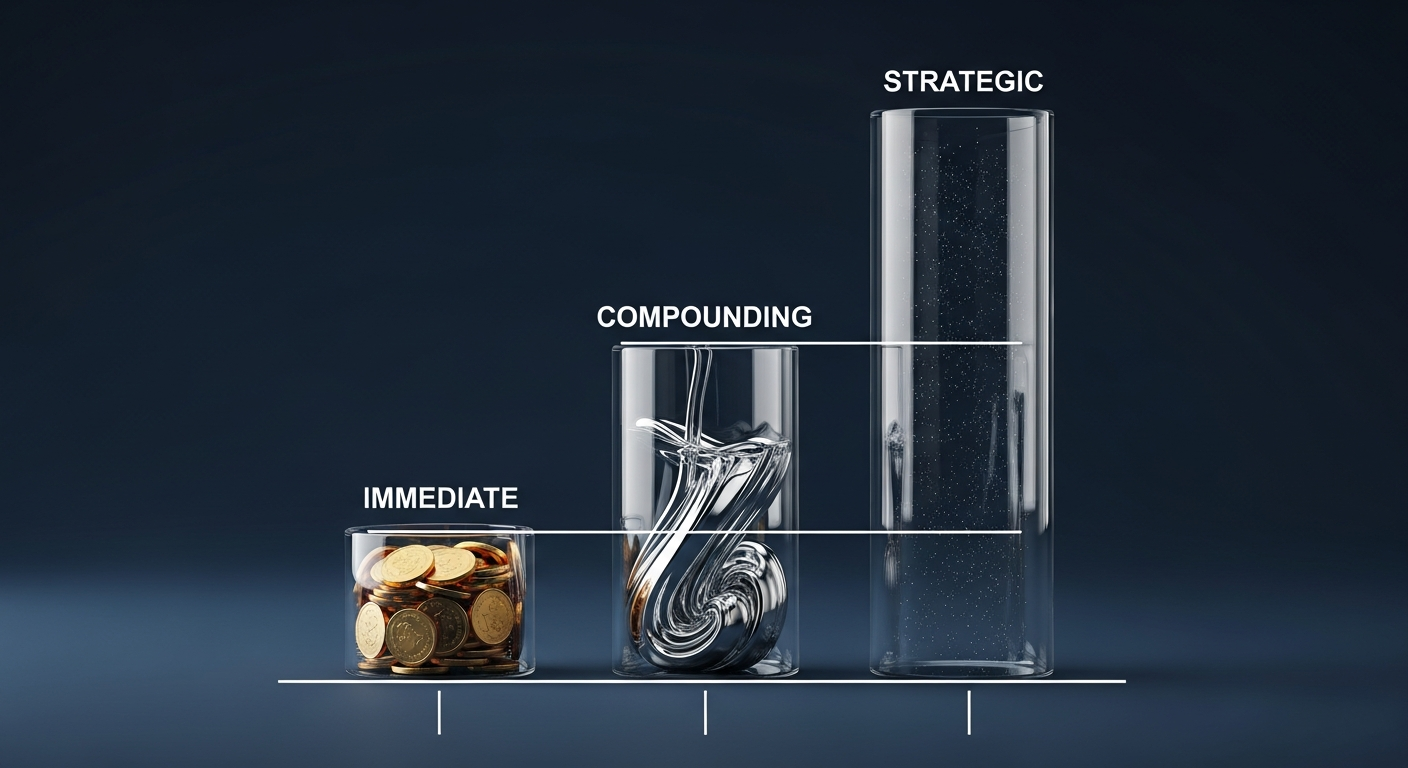

The Reframe: Measure in Strata, Not Totals

The companies capturing real AI value don't measure ROI as a single number. They measure it in three distinct layers, weight them differently, and report them separately to different parts of the organization.

This sounds like accounting theater. It's not. It's the difference between killing a $2M project that returned $8M and keeping a $180K project that returned $600K. Both matter. But they matter for different reasons, to different people, at different time horizons.

Layer One: Immediate Direct ROI (Days 0-60)

This is pure labor arbitrage. Cost avoidance. A document processing AI that cuts manual data entry from 40 hours weekly to 6 hours. You know the hourly cost. You know utilization. The math is mechanical.

A financial services firm deployed an AI contract analyzer in their legal department. Baseline: attorneys spending 15 hours per contract on initial review. Post-AI: 3 hours per contract. 200 contracts annually. That's 2,400 recovered billable hours. At $250/hour, that's $600K against a $180K annual software cost.
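The contract analyzer arithmetic can be written down as a small reusable calculation. This is a minimal sketch using the numbers from the example above. The class name `LayerOneCase` and its fields are hypothetical, and it assumes every recovered hour is worth the full hourly rate.

```python
from dataclasses import dataclass


@dataclass
class LayerOneCase:
    baseline_hours_per_unit: float
    post_ai_hours_per_unit: float
    units_per_year: int
    hourly_rate: float
    annual_cost: float

    @property
    def hours_recovered(self) -> float:
        saved_per_unit = self.baseline_hours_per_unit - self.post_ai_hours_per_unit
        return saved_per_unit * self.units_per_year

    @property
    def gross_savings(self) -> float:
        return self.hours_recovered * self.hourly_rate

    @property
    def net_benefit(self) -> float:
        return self.gross_savings - self.annual_cost

    @property
    def roi(self) -> float:
        """Net benefit as a multiple of annual cost."""
        return self.net_benefit / self.annual_cost

    @property
    def payback_months(self) -> float:
        """Months of gross savings needed to cover one year of cost."""
        return self.annual_cost / (self.gross_savings / 12)


contract_review = LayerOneCase(
    baseline_hours_per_unit=15,
    post_ai_hours_per_unit=3,
    units_per_year=200,
    hourly_rate=250,
    annual_cost=180_000,
)

print(contract_review.hours_recovered)   # 2400 hours
print(contract_review.gross_savings)     # 600000 dollars
print(f"{contract_review.roi:.0%}")      # 233%
print(contract_review.payback_months)    # 3.6 months
```

If recovered hours are only partly redeployed to billable work, scale `hourly_rate` down accordingly. That is the utilization assumption the paragraph above says you should already know.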

Layer One appears in the first 60 days. If it doesn't, your implementation is broken or your use case wasn't real. This is your kill gate. If you see no Layer One movement by day 60, you're not waiting for compound value to show up—you're throwing money at a bad bet.
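The day-60 gate is simple enough to encode so nobody can argue with it later. This is a sketch, not a standard: the article only says "no Layer One movement," so the 5% minimum improvement in cost per unit is an assumed threshold, and `layer_one_gate` is a hypothetical name.

```python
from datetime import date

GATE_DAYS = 60  # the day-60 kill gate described above


def layer_one_gate(
    launch: date,
    today: date,
    baseline_cost_per_unit: float,
    current_cost_per_unit: float,
    min_improvement: float = 0.05,  # assumed threshold; pick your own before launch
) -> str:
    """Return a gate decision based on direct cost movement since launch."""
    days_live = (today - launch).days
    if days_live < GATE_DAYS:
        return "keep measuring"
    improvement = (baseline_cost_per_unit - current_cost_per_unit) / baseline_cost_per_unit
    return "continue" if improvement >= min_improvement else "fix or kill"


# Example: 73 days after launch, cost per ticket moved from 10.00 to 9.80 (2%).
print(layer_one_gate(date(2025, 1, 1), date(2025, 3, 15), 10.0, 9.8))
```

The threshold matters less than writing it down before launch, so the gate cannot be renegotiated on day 59.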

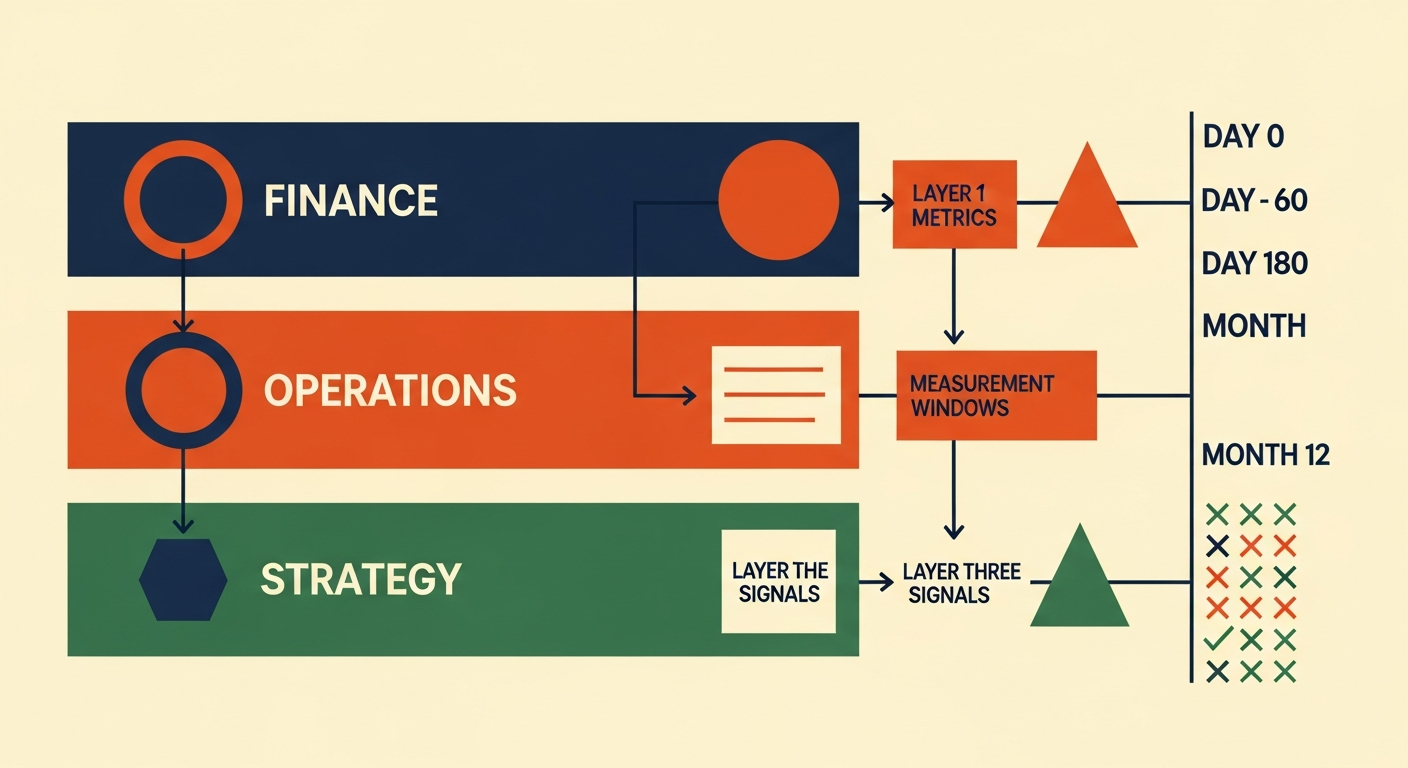

Report Layer One monthly to your CFO. It's the only metric finance truly understands.

Layer Two: Operational Compounding ROI (Days 90-180)

This is where the real money lives. And where most companies go blind.

The SaaS company's support agent shows this clearly. Direct savings: modest. Faster resolution times: measurable. But the secondary chain reaction—lower churn, higher customer lifetime value—was 5x the initial savings.

A D2C brand implemented AI pricing optimization across their catalog. Layer One: a few percentage points of margin lift from smarter pricing decisions. But the secondary effect hit harder. Dynamic discounts shown at cart drove lower abandonment. Better unit economics meant they could increase CAC spend. More customer acquisition drove growth rate higher. That growth rate improvement changed their Series B valuation before a single new customer paid full price.

Layer Two is difficult to isolate because correlation and causation blur. You need a control period or a pre/post window with external variables controlled. It takes 90-180 days to measure reliably. And it requires actual operational discipline—not a spreadsheet that auto-multiplies Layer One savings by an optimistic factor.
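One common way to separate the AI's effect from everything else that moved in the same window is a difference-in-differences comparison: measure the change in a group exposed to the AI, then subtract the change in a comparable group that wasn't. The article doesn't prescribe a method, so treat this as one option. The churn figures below are invented purely to show the mechanics.

```python
from statistics import mean


def diff_in_diff(treated_pre, treated_post, control_pre, control_post) -> float:
    """Change in the treated group minus change in the control group."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical monthly churn rates (%), three months before and after launch.
treated_pre, treated_post = [5.0, 5.2, 5.1], [3.4, 3.2, 3.3]   # segment served by the AI
control_pre, control_post = [5.1, 5.0, 5.2], [4.9, 4.8, 5.0]   # comparable segment without it

effect = diff_in_diff(treated_pre, treated_post, control_pre, control_post)
print(f"Churn change attributable to the AI: {effect:.1f} points")
```

In this made-up data the treated segment's churn fell 1.8 points, but 0.2 of that happened in the control segment too, so only about 1.6 points can be credited to the AI. The estimate is only as good as the control group: if the two segments differ in seasonality, pricing, or product mix, the subtraction won't remove those effects.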

Where Layer One gets a monthly finance dashboard, Layer Two gets a quarterly operating review with a business owner who owns the actual metric: churn, throughput, defect rates, inventory turns. Not a derived calculation. The real thing.

Layer Three: Strategic ROI (The Invisible Layer)

An enterprise software company built an AI recommendation engine into their product. Layer One: zero labor cost reduction. The feature didn't replace anyone. Layer Two: modest operational gains.

But over 18 months, customers relying heavily on the recommendations had 40% higher retention. Those customers bought more seats. The feature made the product defensible against competitor churn. Suddenly the company's unit economics improved. Their CAC payback compressed. Their valuation multiple improved.

That's Layer Three. It doesn't appear on spreadsheets. It appears in NPS movement, customer concentration shift, deal velocity acceleration, and your best people's decision to stay or leave. It's the most valuable and the hardest to quantify.

A healthcare organization deployed AI for diagnostic support. It replaced zero FTEs. But clinicians using it reported higher confidence in borderline cases. Patient outcomes measurably improved. That became a differentiator in their market. Competitors couldn't claim the same clinical validation. Suddenly they could bid for higher-acuity contracts.

Layer Three gets tracked through leading indicators, not financial reports. It lives in board conversations and competitive win/loss analysis. You need a strategy person watching it, not a data analyst.

The Operator Playbook

- Before launch, write down your hypothesis for all three layers. What's the Layer One target? How will you measure Layer Two effects? What's the leading indicator suggesting Layer Three is working? This isn't optional. It's your forcing function against wishful thinking.

- Assign three different owners with three different cadences. Finance owns Layer One, reported monthly. Operations owns Layer Two, reported quarterly. Strategy owns Layer Three, brought to board meetings quarterly. Hand all three to one person and none of them gets the attention it needs.

- Set a hard Layer One gate at day 60. If you're not seeing direct ROI movement by then, you either have an execution problem or a bad use case. Fix it or kill it. Don't wait for 'compounding benefits' to justify a broken foundation.

- Build Layer Two tracking into your monthly operating rhythm, even though the formal review is quarterly. Create a before/after dashboard for the actual business metric (churn, response time, throughput, defect rate). Show the trend line. Add a written explanation of what changed. Most of your real value lives here. If you only look at it once a quarter, you're flying blind for the months in between.

- Report the three layers separately. Your CFO sees Layer One and Layer Two—that's the efficiency story. Your CEO sees all three with equal narrative weight. Your board sees a translation of technical gains into strategic outcomes. Don't collapse all three into one 'total ROI' number. That number is a lie.

- Resist the urge to math-stack all benefits into Layer One. You'll be tempted. Showing a bigger direct ROI by including secondary effects is seductive. You'll lose all credibility when the math breaks or Layer Two doesn't materialize. Keep them separate or you can't diagnose what's working.

- Run a portfolio review every quarter. Rank all active AI initiatives by Layer One payback speed, Layer Two magnitude, and Layer Three strategic contribution. This forces discipline about which projects get funding next and which get sunset.
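The quarterly portfolio review can start from something as small as the sketch below. It produces three separate rankings instead of one blended score, in keeping with the rule against collapsing the layers. The `Initiative` fields, the 1-5 Layer Three score, and every figure in the example are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Initiative:
    name: str
    payback_months: float     # Layer One: lower is better
    layer_two_value: float    # Layer Two: annual value of the operating metric change
    layer_three_score: int    # Layer Three: 1-5 judgment call from the strategy owner


def rank_by_layer(initiatives: list[Initiative]) -> dict[str, list[str]]:
    """Return one ranking per layer, best first, without blending them."""
    def names(key, reverse=False):
        return [i.name for i in sorted(initiatives, key=key, reverse=reverse)]

    return {
        "layer_one": names(lambda i: i.payback_months),
        "layer_two": names(lambda i: i.layer_two_value, reverse=True),
        "layer_three": names(lambda i: i.layer_three_score, reverse=True),
    }


# Placeholder portfolio; the numbers are illustrative, not from real projects.
portfolio = [
    Initiative("contract analyzer", 3.6, 0.0, 1),
    Initiative("support agent", 14.0, 900_000.0, 3),
    Initiative("recommendation engine", 24.0, 150_000.0, 5),
]

rankings = rank_by_layer(portfolio)
for layer, ordered in rankings.items():
    print(layer, "->", ordered)
```

A project that tops one list and sits at the bottom of another is the interesting conversation in the review. A single weighted score would hide exactly that.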

The Portfolio Discipline Test

Here's what separates the operators capturing real AI ROI from those losing money on it: they measure rigorously enough to explain why each project works, then they fix the projects that aren't working. They don't declare victory on metrics that feel good. They also don't kill projects because Layer One looks weak if Layers Two and Three are genuinely compelling.

The companies failing at AI ROI do one of two things. They measure so narrowly (Layer One only) that they starve genuinely valuable projects of capital. Or they measure so loosely (aggregating all benefits into one magic number) that they can't actually tell if anything is working, so they fund projects based on who shouted loudest.

The difference in three-year outcomes between these two patterns is typically a factor of 3-5x in total AI value captured.

The Real Test: Can You Explain What Broke?

If a project underperforms, you should be able to pinpoint exactly where. Is Layer One tracking below target? Then you have an execution problem—the AI or the process isn't working. Fix the implementation.

Is Layer One solid but Layer Two isn't compounding? Then the secondary effects aren't materializing. Maybe your team isn't actually using the output. Maybe the metric you're trying to move isn't actually sensitive to this input. That's a strategy problem, not a product problem.

Are Layer One and Layer Two working but Layer Three signals aren't moving? Then you've built an efficient tool that hasn't changed competitive positioning. That's valuable but finite. Plan for that ceiling.
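The three questions above work as an ordered checklist: stop at the first layer that isn't performing. Here is a minimal sketch of that triage. The function name and the boolean inputs are assumptions, and deciding whether each layer is "on target" still takes the measurement work described earlier.

```python
def diagnose(
    layer_one_on_target: bool,
    layer_two_compounding: bool,
    layer_three_moving: bool,
) -> str:
    """Walk the layers in order and report the first one that broke."""
    if not layer_one_on_target:
        return "execution problem: fix the implementation"
    if not layer_two_compounding:
        return "strategy problem: check adoption and whether the metric responds to this input"
    if not layer_three_moving:
        return "efficient tool with a ceiling: plan for finite value"
    return "all three layers working"


print(diagnose(layer_one_on_target=True, layer_two_compounding=False, layer_three_moving=False))
```

The order matters. A weak Layer Two reading means little if Layer One never worked, so the checks run from the bottom layer up.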

If you can't explain which layer broke, you don't have enough measurement discipline. Add more. The companies winning at AI ROI aren't the ones with the fanciest AI. They're the ones who measure carefully enough to know exactly what's working and what's not.
