The AI Stack Every Lean SaaS Team Actually Needs in 2026

A Series A fintech founder shipped in six months what a Series B competitor promised in Q2. The difference wasn't talent or capital—it was how they stacked AI into existing workflows.

Six months ago, a Series A fintech founder with eight employees told me she'd just shipped a feature her competitor—a Series B company with 40 people—had promised for Q2. She didn't have an AI researcher. She didn't have a data engineering team. She had Claude, Zapier, and a ruthless focus on where AI actually moved the needle.

I asked her competitor's CTO what went wrong. His answer: they'd spent three months evaluating fine-tuned models, building internal infrastructure, setting up experiment tracking. They had better AI talent. More capital. Better intentions. And they lost the race.

The gap wasn't philosophical. It was architectural. One team had built a stack. The other had tried to build a platform. One shipped. One didn't.

The Mistake: Confusing Narrow with Simple

Most founders approach AI adoption the way they approach hiring: ask "which is best?" and then try to plug the winner in everywhere. They buy enterprise LLM APIs, spin up vector databases, hire a machine learning engineer, and suddenly they're managing infrastructure instead of a business.

The actual problem is narrower. Your support team spends 40% of its time routing and categorizing tickets instead of solving problems. Your content operation drowns in first-draft work. Your sales reps chase low-probability leads because they lack qualification signals.

But here's where founders get stuck: they see a narrow problem and assume it needs a simple solution. One tool. Point it at the problem. Ship it. Six weeks later they've optimized the wrong function, chosen a tool that creates downstream work, or built something that breaks when the product changes.

The mistake isn't AI. It's treating a multi-step problem as if it were a single step.

The Reframe: Stack, Not Tool

Teams winning right now think in stacks, not tools. A stack is a sequence of four connected decisions: where does the work actually live? What transformation does it need? Where should the output land? How does it feed back into your metrics?

Pick wrong at any point and the whole thing breaks—it costs too much, fails in production, or nobody actually uses it.

The fintech founder's actual stack was shockingly simple: product (Stripe) → Claude API → internal database → metrics dashboard. Four components. Three were already running anyway. She wasn't inventing—she was connecting what already existed.

The Four-Layer Pattern

1. Ingest: Where Work Actually Lives

Your work is already in a system. Zendesk. Salesforce. Linear. Notion. Your product database. The first layer's job is simple: don't move work. Move data about work.

A support team doesn't rewrite their entire workflow. Zendesk feeds event data to the next layer. That's it. One fintech company pipes Stripe webhook events directly to Claude and gets back a fraud risk assessment. That assessment writes back to a metadata field. No one changes how they work. The system got smarter.

The teams that move work migrate complexity. Custom data pipelines. New tools. Broken SOPs. The fastest teams I've tracked extract data and leave the workflow alone.
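Here is a minimal sketch of the ingest layer, assuming a Stripe-style webhook payload with `id`, `type`, and `data.object` fields. The `WorkItem` type and the `extract_work_item` helper are hypothetical names invented for illustration, and the sample event is made up. The point is that the layer copies a few fields and leaves the source system untouched.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class WorkItem:
    """Data about the work, not the work itself (hypothetical type)."""
    source: str        # system the event came from
    event_id: str      # kept so results can be written back to the right record
    kind: str          # event type as reported by the source
    amount_cents: int
    currency: str


def extract_work_item(event: dict[str, Any]) -> WorkItem:
    """Copy only the fields the next layer needs from a webhook payload."""
    obj = event.get("data", {}).get("object", {})
    return WorkItem(
        source="stripe",
        event_id=event["id"],
        kind=event["type"],
        amount_cents=int(obj.get("amount", 0)),
        currency=str(obj.get("currency", "")).lower(),
    )


# Made-up sample payload, shaped like a payment webhook event.
sample_event = {
    "id": "evt_123",
    "type": "charge.succeeded",
    "data": {"object": {"amount": 4999, "currency": "USD"}},
}
item = extract_work_item(sample_event)
```

Keeping `event_id` on the extracted record is what lets a later layer write its assessment back to the original object's metadata field.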

2. Reason: The Transformation

This is where AI does actual work. You're transforming raw data into structured output: extract entities from support tickets, categorize leads by revenue probability, draft responses, flag anomalies. The model doesn't need to be perfect—it needs to be good enough that the output is useful and the error rate is acceptable.

The mistake is waiting for perfection. Teams spend weeks prompt engineering, building evaluation sets, measuring baseline accuracy. Meanwhile their competitor shipped a Claude integration in four days, accepted that 15% of outputs would need review, and iterated twice.

Current APIs (Claude 3.5, GPT-4, open-source models on Modal or Together) mean you rarely need to fine-tune anything to get started. Use a base model with strong prompting. Measure what breaks in production. Fix the prompt. Ship the next version.
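A rough sketch of what "a base model with strong prompting" can look like for ticket categorization. Everything here is an assumption for illustration: the label set, the prompt wording, the 0.8 review threshold, and the `call_model` parameter, which stands in for whichever API client you use. The model call is passed in as a plain function so the sketch runs offline.

```python
import json
from typing import Callable

CATEGORIES = ("billing", "bug", "how_to", "other")  # assumed label set

PROMPT_TEMPLATE = (
    "Classify the support ticket into one of: {labels}.\n"
    'Reply with JSON only, e.g. {{"category": "billing", "confidence": 0.9}}.\n'
    "Ticket:\n{ticket}"
)


def classify_ticket(ticket: str, call_model: Callable[[str], str]) -> dict:
    """Send one prompt, then validate the reply before anything trusts it."""
    prompt = PROMPT_TEMPLATE.format(labels=", ".join(CATEGORIES), ticket=ticket)
    raw = call_model(prompt)
    fallback = {"category": "other", "confidence": 0.0, "needs_review": True}
    try:
        data = json.loads(raw)
        category = data["category"]
        confidence = float(data["confidence"])
    except (ValueError, KeyError, TypeError):
        # Unparseable output goes to a human instead of being guessed at.
        return fallback
    if category not in CATEGORIES:
        return fallback
    return {
        "category": category,
        "confidence": confidence,
        "needs_review": confidence < 0.8,  # assumed review threshold
    }


def fake_model(prompt: str) -> str:
    """Stand-in for a real API call (hypothetical canned reply)."""
    return '{"category": "billing", "confidence": 0.93}'


result = classify_ticket("I was charged twice this month.", fake_model)
```

When production surfaces a failure, the fix usually lands in `PROMPT_TEMPLATE` or the validation rules, not in the model.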

3. Route: The Output Staging Ground

Raw model output almost never goes directly to production. It lands somewhere safe first—somewhere humans can review it, systems can act on it, and corrections surface quickly.

A support team doesn't let Claude reply to customers directly. Claude drafts a response. It lands in a Slack thread. The agent reviews it in 10 seconds, clicks approve or edits, and only then does it send. That agent goes from 60 tickets to 90. The model doesn't need to be perfect because humans are still in the loop, but the human isn't starting from a blank page.

Tools for this layer: Zapier, Make, n8n for lightweight automation. Your own application logic for custom routing. A Slack channel for anything requiring human eyes. The point is speed—get the output visible and actionable within seconds.
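One way to picture the staging ground is a small review queue that sits between model output and the customer. This is an in-memory sketch under assumed names (`Draft`, `ReviewQueue`, `Status` are all hypothetical). In practice the same states could live in a Slack thread, a database flag field, or a queue in your existing tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    EDITED = "edited"


@dataclass
class Draft:
    ticket_id: str
    model_text: str
    status: Status = Status.PENDING
    final_text: Optional[str] = None


class ReviewQueue:
    """Holds model drafts until a human approves or edits them."""

    def __init__(self) -> None:
        self._drafts: dict[str, Draft] = {}

    def stage(self, ticket_id: str, model_text: str) -> Draft:
        draft = Draft(ticket_id, model_text)
        self._drafts[ticket_id] = draft
        return draft

    def review(self, ticket_id: str, edited_text: Optional[str] = None) -> Draft:
        """Approve as-is, or replace the text with the reviewer's edit."""
        draft = self._drafts[ticket_id]
        if edited_text is None:
            draft.status, draft.final_text = Status.APPROVED, draft.model_text
        else:
            draft.status, draft.final_text = Status.EDITED, edited_text
        return draft

    def sendable(self) -> list[Draft]:
        """Only reviewed drafts are eligible to reach a customer."""
        return [d for d in self._drafts.values() if d.status is not Status.PENDING]

    def edit_rate(self) -> float:
        """Share of reviewed drafts that needed a human edit."""
        reviewed = self.sendable()
        if not reviewed:
            return 0.0
        return sum(d.status is Status.EDITED for d in reviewed) / len(reviewed)


queue = ReviewQueue()
queue.stage("T-1", "Thanks for reaching out. Your refund is on its way.")
queue.stage("T-2", "Please try resetting your password.")
queue.stage("T-3", "We are looking into it.")
queue.review("T-1")                                    # approved unchanged
queue.review("T-2", edited_text="Please clear your cache, then reset your password.")
```

Tracking the edit rate alongside the queue gives you an early signal of prompt quality before any business metric moves.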

4. Measure: The Feedback Loop That Prevents Decay

This is where most stacks break. Teams ship AI, feel accomplished, and never measure what actually changed. Did support resolution time improve? Did reps close more deals? Did content output increase? Or did you just push work sideways?

A growth-stage SaaS company deployed AI lead scoring in their sales process. It looked perfect—higher confidence leads routed to top reps. After six weeks, actual conversion rates hadn't moved. They'd optimized a bottleneck that wasn't a bottleneck. They shut the project down and redirected the time elsewhere.

Lean teams measure obsessively: tickets closed per day, email response time, draft-to-publish cycle time, conversion rate. Pick one metric. Measure it for two weeks before deploying AI. Measure it weekly after. If it doesn't move in a month, reassess.
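The baseline-then-weekly rule can be written down as a small check. This is a sketch, not a statistical test: the 10% improvement threshold, the four-week cutoff standing in for "a month", and the function name `assess_metric` are all assumptions, and the sample numbers are illustrative.

```python
from statistics import mean


def assess_metric(
    baseline: list[float],
    weekly_after: list[float],
    min_improvement: float = 0.10,   # assumed threshold, tune per metric
    higher_is_better: bool = True,
) -> dict:
    """Compare the pre-deploy baseline with the latest post-deploy weekly value."""
    if not baseline or not weekly_after:
        raise ValueError("need both baseline and post-deploy measurements")
    base = mean(baseline)
    if base == 0:
        raise ValueError("baseline mean is zero; relative change is undefined")
    latest = weekly_after[-1]
    change = (latest - base) / base
    improvement = change if higher_is_better else -change
    if improvement >= min_improvement:
        verdict = "improved"
    elif len(weekly_after) >= 4:      # roughly a month with no movement
        verdict = "reassess"
    else:
        verdict = "keep measuring"
    return {"baseline": base, "latest": latest, "change": change, "verdict": verdict}


# Illustrative numbers: tickets per agent per day.
two_week_baseline = [60.0] * 10           # ten working days before deploying
report = assess_metric(two_week_baseline, weekly_after=[72.0, 85.0, 90.0])
stalled = assess_metric([60.0, 60.0], weekly_after=[60.0, 61.0, 60.0, 61.0])
```

For metrics where lower is better, such as email response time, pass `higher_is_better=False`.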

Monday Morning: How to Build This

- Identify one function where you lose 40%+ of time to a repeatable task. Support routing. Lead qualification. Content first drafts. Be specific.

- Map the current flow: where the data lives (tool name, field names), who acts on it, and where the output goes. If you can't answer this clearly, you've found the wrong bottleneck.

- Pick your transformation model: Claude via API is the safest start. Costs are predictable. Quality is consistent. If you're processing thousands of requests daily, evaluate Together or Modal for cost. Otherwise, use Claude.

- Build the staging ground: Slack channel for human review, database table with a flag field, or a queue in your existing tool. You're adding one decision point between model output and action.

- Set the metric before you deploy: current tickets per agent per day, current lead-to-pipeline conversion rate, current draft-to-publish cycle time. Document it. Measure it for two weeks.

- Ship the simplest version: one prompt, one model, one routing rule. Get it in front of users. You'll learn more in a week of production use than in a month of planning.
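Taken together, the steps above amount to wiring three small functions in sequence and counting what passes through. The sketch below assumes hypothetical names (`run_stack`, `review_channel`) and uses lambdas as stand-ins for your real ingest, model call, and routing rule. It shows the shape of the simplest version, not a production design.

```python
from typing import Any, Callable, Iterable


def run_stack(
    events: Iterable[dict[str, Any]],
    ingest: Callable[[dict[str, Any]], str],
    reason: Callable[[str], str],
    route: Callable[[str, str], None],
) -> int:
    """Push each event through ingest -> reason -> route; return the count handled."""
    handled = 0
    for event in events:
        text = ingest(event)      # pull data about the work
        output = reason(text)     # one prompt, one model
        route(text, output)       # one routing rule
        handled += 1
    return handled


# Stand-in for a Slack channel or a database table with a review flag.
review_channel: list[tuple[str, str]] = []

count = run_stack(
    events=[{"subject": "Refund request"}, {"subject": "Login fails"}],
    ingest=lambda e: e["subject"],
    reason=lambda text: f"DRAFT: reply about '{text}'",   # placeholder for a model call
    route=lambda text, output: review_channel.append((text, output)),
)
```

The returned count is the raw input for the metric you documented before deploying.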

What Actually Separates Winners from Everyone Else

The teams winning aren't smarter. They're not buying better tools. The difference is that they see AI as infrastructure, not magic. They connect existing systems. They measure impact. They iterate fast. And critically: they know that the stack degrades the moment you stop measuring.

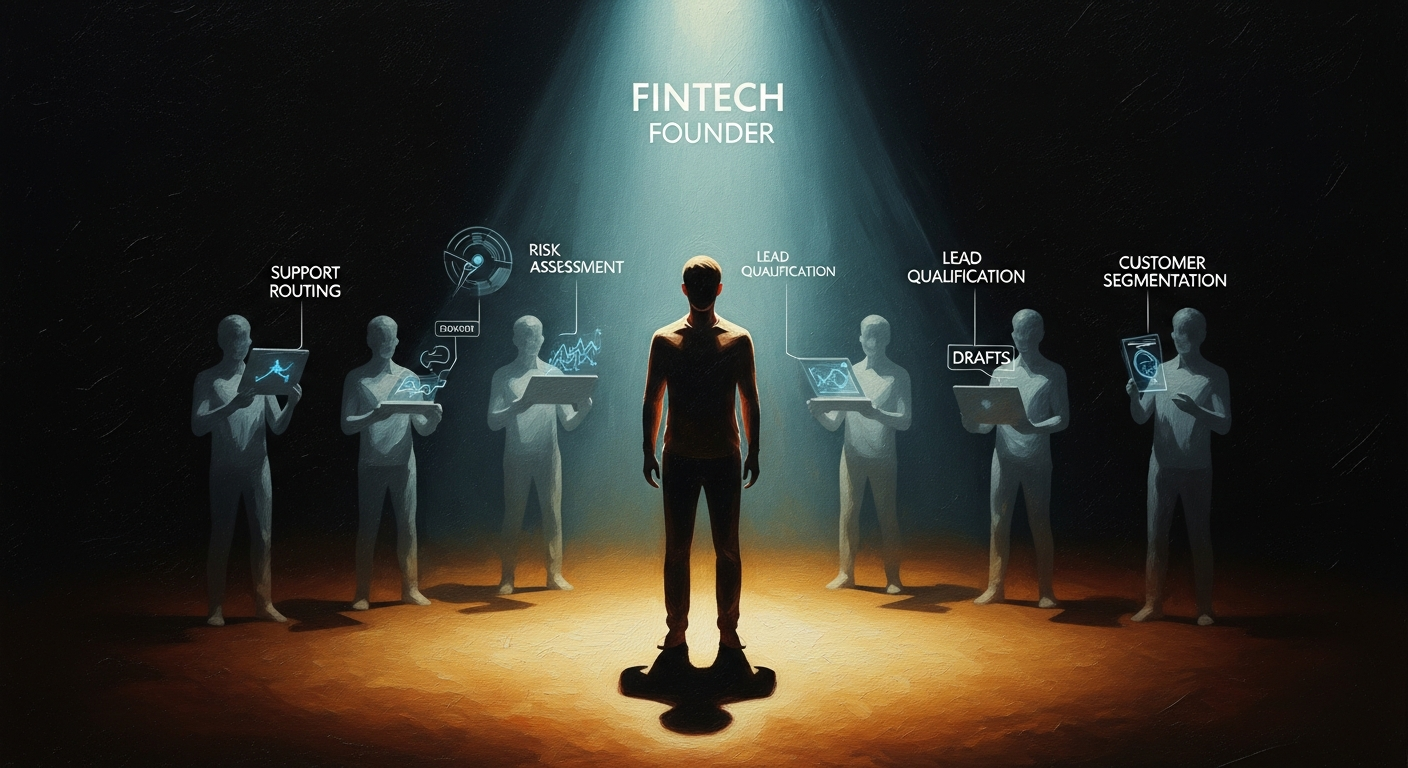

The fintech founder has now deployed that same stack to five functions: risk assessment, lead qualification, support routing, fraud detection, onboarding copy. She hasn't hired an AI person. No data team. She has a repeatable process: identify bottleneck → ingest data → add transformation → route output → measure impact. She's run it five times. Four worked. One didn't, so she shut it down.

That's the actual state of AI adoption in lean teams right now. Not perfect. Not sophisticated. Pragmatic. Fast. And measurably profitable.

The difference between winning and losing in AI adoption in 2026 isn't who has the smartest model or the most capital—it's who treats AI as a sequence of connected decisions and who builds in the feedback loop that keeps it from breaking.
