ChatGPT vs Claude: The Decision Nobody Gets Right

Most teams benchmark model capability and pick wrong. The real decision isn't which model is smarter—it's which one your team will actually use without friction.

A fintech founder watched her team's support automation project stall six months after launch. They'd spent weeks on a rigorous evaluation: response quality, latency, token efficiency, cost per query at scale. Both models tested nearly identically. Both cost roughly the same. The decision felt straightforward.

But something was wrong. Manual ticket writing hadn't decreased. Adoption was stuck at 12%. When she dug into what was actually happening on her support team's machines, she discovered the problem had nothing to do with model intelligence. The chosen tool didn't plug into their existing ticketing system. The other one had a native Zapier integration that made the workflow frictionless. They switched. Three weeks later, adoption was 87%.

The model didn't change. The capability didn't change. The friction changed. And everything followed from that.

The Hidden Problem: Capability Theater

Most evaluation processes measure the wrong thing. They run structured benchmarks. They test edge cases. They conclude something like: "Claude wins on reasoning, ChatGPT wins on speed." This is technically accurate and operationally useless.

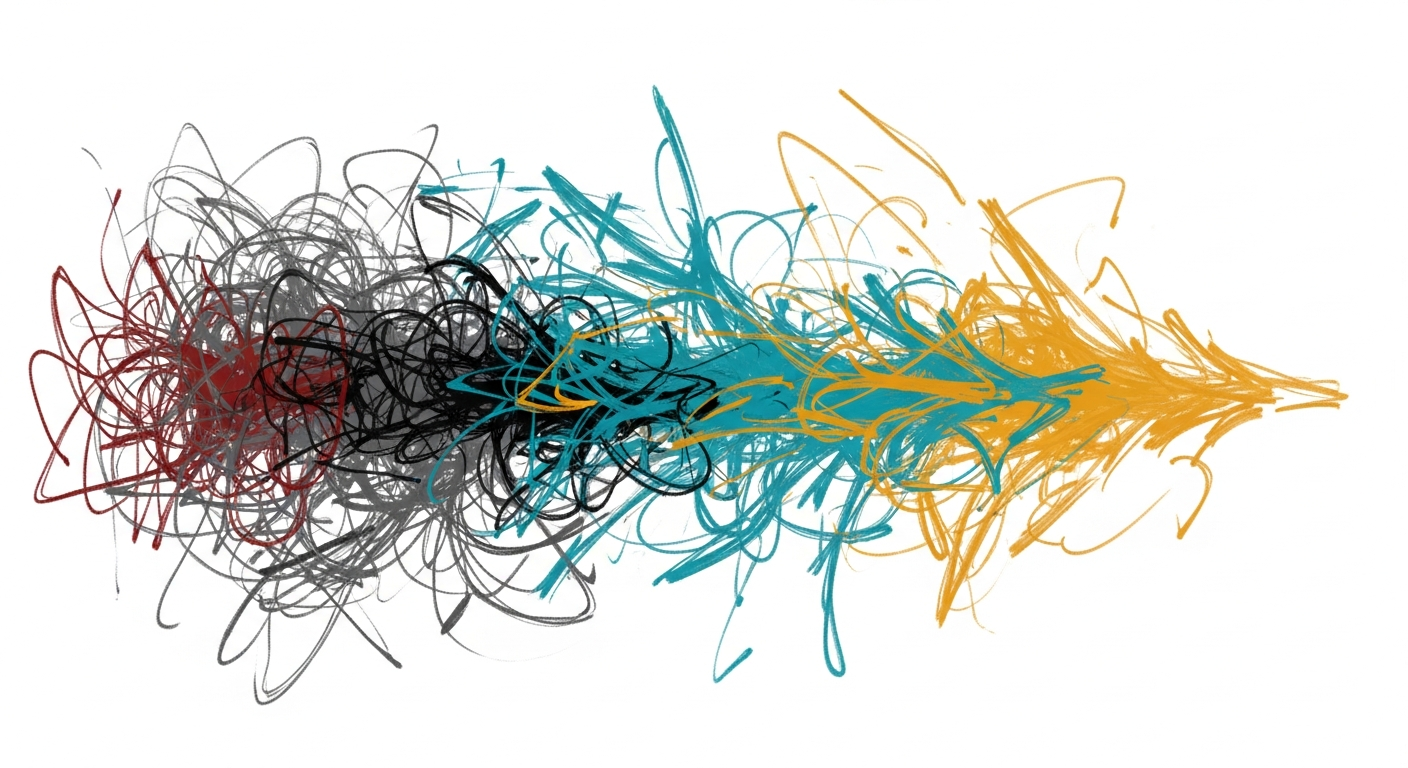

The capability gap between modern models has compressed to a point where both handle the vast majority of business work—customer support, content generation, code review, data extraction, research synthesis. Your actual bottleneck stopped being model intelligence years ago. It's adoption. It's whether your support team reaches for the tool without thinking, or whether it requires an extra step and gets abandoned.

This creates uncomfortable pressure on decision-makers because it means the answer isn't elegant. It's not a clear technical victory that you can defend in a meeting. It's messy, contextual, and depends entirely on your infrastructure, your team's existing habits, and your integration points. Most teams can't accept that. So they keep looking for the elegant answer. And they pick wrong.

The Reframe: Adoption Is the Only Differentiator

The right model to pick is the one your team will reach for without thinking. Everything else follows from that.

A content operations team at a B2B SaaS company was using Claude via the web interface for editing and fact-checking. It worked. But they were losing 45 minutes per day to context switching: open the document, switch to Claude, write down the note, switch back. When they evaluated ChatGPT, they found it had a native Google Docs plugin. They could stay in the document. The model's capability wasn't the deciding factor, and output quality was comparable. But switching tools cut their context-switching time to near zero and increased throughput by 30%.

This is what actually matters. Not the model. The friction.

The Decision Tree

The real evaluation process starts with three diagnostic questions about your actual operations. Your answers determine which model wins for you.

Question 1: Where Does the Work Actually Happen?

If your team lives in Slack, you want the model with the best Slack integration. If engineers are building in VS Code, you want native editor presence. If your workflows are orchestrated through Zapier, you want the richest automation connectors. If your design team works in Figma, you want the model that integrates there.

Don't ask: "Which model is better?" Ask: "Where is my team already working?" Then check which model has native presence there. That's your first decision point.
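One way to make this first decision point concrete is to weight each tool by the time your team spends in it, then see how much of that time each model covers natively. The sketch below is only an illustration: the hours, the tool names, and the `model_a` / `model_b` integration sets are hypothetical placeholders, and you would replace them with what your own audit verifies.

```python
from typing import Dict, Set


def native_coverage(hours_per_tool: Dict[str, float], native_tools: Set[str]) -> float:
    """Fraction of working time spent in tools where a model has a native integration."""
    total = sum(hours_per_tool.values())
    if total == 0:
        return 0.0
    covered = sum(h for tool, h in hours_per_tool.items() if tool in native_tools)
    return covered / total


# Hypothetical audit: weekly hours a typical team member spends per tool.
hours_per_tool = {"Slack": 14, "Google Docs": 12, "VS Code": 8, "Figma": 6}

# Hypothetical findings; verify each integration yourself before relying on it.
integrations = {
    "model_a": {"Slack", "Google Docs"},
    "model_b": {"VS Code"},
}

scores = {name: native_coverage(hours_per_tool, tools) for name, tools in integrations.items()}
best = max(scores, key=scores.get)

for name, score in scores.items():
    print(f"{name}: native presence covers {score:.0%} of working time")
```

The score says nothing about model quality. It only answers the question this section asks: where the team already works, and which model is already there.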

Question 2: What's Your Integration Pattern?

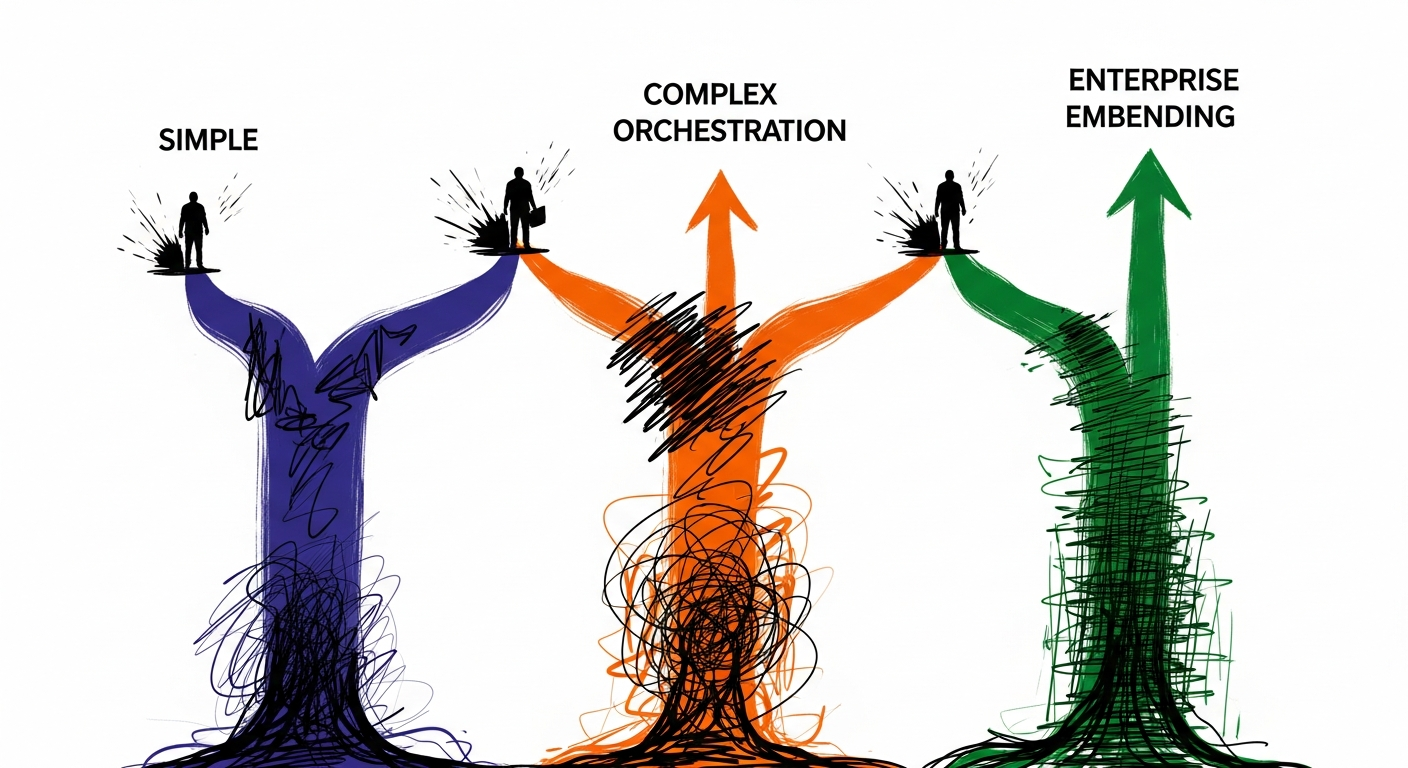

This splits into three buckets with different decision rules:

- Simple, standalone features (chatbots, email drafting, one-off analysis). Both models work identically via API. Friction here is negligible. Flip a coin or defer to question 3.

- Complex orchestration (multiple models, conditional logic, chains). You'll care about API reliability, error-handling behavior, rate-limit transparency. Spend a week testing both in your actual environment. Measure where you hit friction. That data decides.

- Enterprise embedding (sitting inside your product). Latency, cost per token, and vendor stability matter. You're betting on their roadmap. Have a longer vendor conversation. This is a relationship decision, not just a technical one.

Question 3: What's Your Team's Existing Mental Model?

If your team has been using ChatGPT since 2022, they have years of intuition built up. They know how to prompt it. They know what to expect. They have workarounds for its quirks. Switching to Claude means asking them to rebuild that intuition from scratch.

A research team switched from GPT-4 to Claude because benchmarks suggested better performance. For the first month, output quality actually declined. The team was prompting Claude like they prompted GPT-4. Claude prefers different instruction patterns. Once they adapted, Claude was better. But the adaptation cost real time and real friction.

Don't let this stop you from switching if the technical case is strong. But understand the cost. Include it in your timeline. Give the team two weeks to recalibrate before declaring victory or failure.
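The three questions can be read as a small decision procedure. The sketch below is one possible interpretation, not a definitive rule: the function name `recommend`, its parameters, and the exact wording of the outcomes are assumptions made for illustration. Each of the first two arguments is the model that question points to, or `None` when it shows no clear winner.

```python
from typing import Optional


def recommend(q1_workplace: Optional[str],
              q2_integration: Optional[str],
              incumbent: Optional[str]) -> str:
    """Combine the three diagnostic questions into a single recommendation."""
    signals = {s for s in (q1_workplace, q2_integration) if s is not None}

    if len(signals) == 1:
        # Questions 1 and 2 agree, or only one of them has a clear answer.
        choice = signals.pop()
        if incumbent and choice != incumbent:
            # Question 3: switching carries a recalibration cost.
            return f"switch to {choice}; allow two weeks of recalibration before judging"
        return f"use {choice}"

    if not signals:
        # Neither question separates the models, so defer to question 3.
        if incumbent:
            return f"stay with {incumbent}"
        return "no clear signal; run a one-week trial of both"

    return "signals conflict; run a one-week trial of both"


print(recommend("claude", "claude", "chatgpt"))
print(recommend("chatgpt", "claude", None))
```

The incumbent only changes the outcome when the first two questions leave room for it, which mirrors the advice above: a strong case for switching still wins, but the recalibration cost goes into the plan.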

What Actually Differentiates Them

ChatGPT's Real Advantages

- Deeper ecosystem integration. More third-party tools have native connectors. Zapier, Make, Slack, Google Workspace, VS Code—most of them built ChatGPT support first.

- Installed base. More of your team members have personal experience with it, so the mental model cost is lower.

- Proven operational stability at massive scale. If you're running millions of queries monthly and reliability is measured in nines, you're paying for a proven track record.

Claude's Real Advantages

- Larger context window. If your workflows involve long documents, entire codebases, or complex research materials, Claude's 200K-token context window solves a problem ChatGPT requires you to work around.

- Instruction-following precision. Claude tends to follow nuanced instructions more reliably. You need fewer prompt iterations for exact output formatting or complex conditional logic. This compounds over thousands of queries.

- Different strengths on certain reasoning tasks. If you're doing research synthesis, code explanation, or multi-step analysis, Claude's performance often justifies the switch—if the integration friction is low.

The Operator Playbook: Monday-Morning Actions

Here's what to do this week:

- Map where your team actually works. Not where you think they work. Where they actually spend their time. Chat logs, Slack, Figma, Notion, VS Code, Google Docs. Audit the top five tools.

- Check native integrations for both models in those five tools. Document what exists and what doesn't. Friction data beats capability data.

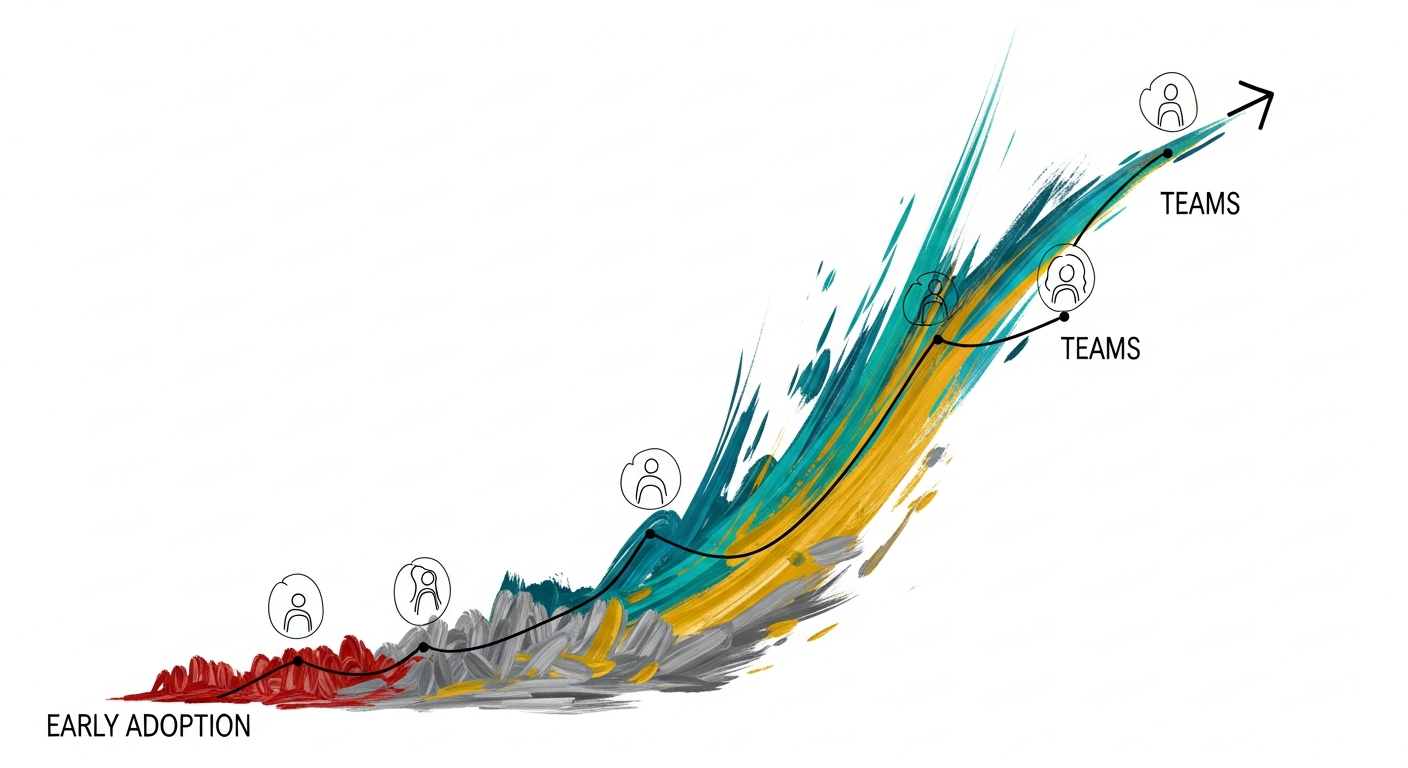

- If you're already using one model, measure adoption. What percentage of your team uses it monthly? Weekly? Daily? Get a baseline. This is your comparison metric.

- Run the three questions above for your specific context. You should be able to answer each in 30 minutes of actual ops investigation.

- If questions 1 and 2 point clearly in one direction, go with that. If they conflict or you're genuinely unsure, spend one week testing both in your actual environment. Measure adoption friction, not capability.

- If you decide to switch from what you're using, give the team two weeks to recalibrate before measuring success. Don't declare failure on day three.
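The adoption baseline in the playbook above can be computed from any usage log that records who used the tool and on which day. The following is a minimal sketch under that assumption. The `(user, day)` event format, the function name `adoption_rates`, and the sample data are all hypothetical, and "daily", "weekly", and "monthly" are taken here to mean trailing 1, 7, and 30 days.

```python
from datetime import date, timedelta
from typing import Dict, Iterable, Tuple


def adoption_rates(events: Iterable[Tuple[str, date]],
                   team_size: int,
                   as_of: date) -> Dict[str, float]:
    """Percent of the team with at least one use in the trailing 1, 7, and 30 days."""
    if team_size <= 0:
        raise ValueError("team_size must be positive")
    windows = {"daily": 1, "weekly": 7, "monthly": 30}
    events = list(events)
    rates = {}
    for label, days in windows.items():
        start = as_of - timedelta(days=days - 1)
        # Count each person once, however many times they used the tool.
        active = {user for user, day in events if start <= day <= as_of}
        rates[label] = round(100 * len(active) / team_size, 1)
    return rates


# Hypothetical usage log for a ten-person team.
log = [
    ("ana", date(2024, 6, 30)),
    ("ana", date(2024, 6, 29)),
    ("ben", date(2024, 6, 27)),
    ("cy", date(2024, 6, 5)),
]
baseline = adoption_rates(log, team_size=10, as_of=date(2024, 6, 30))
print(baseline)
```

Running the same function before a switch and again after the two-week recalibration period gives a like-for-like comparison.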

The Strategic Observation

The companies that win with AI aren't the ones that pick the smartest model. They're the ones that pick the model their team will actually use, then obsess over integration. They understand a hard truth: a mediocre model that 90% of your team uses beats a brilliant model that 10% of your team uses.
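The arithmetic behind that claim is simple enough to check. In the sketch below, the team size and the hours saved per active user are invented numbers chosen only to illustrate the point. The 90% and 10% adoption figures are the ones used above.

```python
def weekly_hours_saved(team_size: int, adoption_pct: int, hours_per_active_user: float) -> float:
    """Total hours saved per week: only people who actually use the tool save time."""
    return team_size * adoption_pct * hours_per_active_user / 100


# Hypothetical: a 50-person team; the stronger model saves each active user more time.
mediocre = weekly_hours_saved(team_size=50, adoption_pct=90, hours_per_active_user=2)
brilliant = weekly_hours_saved(team_size=50, adoption_pct=10, hours_per_active_user=5)

print(f"mediocre model, 90% adoption: {mediocre} hours/week")
print(f"brilliant model, 10% adoption: {brilliant} hours/week")
```

Under these assumptions the widely used model saves 90 hours a week against 25. At a 90% versus 10% adoption split, the less-used model would have to save each user nine times as much to break even.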

They measure success not by benchmark scores but by adoption curves and time actually saved. In an environment where capability is table stakes, adoption is the only differentiator that matters. And adoption is a problem that lives almost entirely outside the model.
